Web Optimization: Can you repeat your test results?

This week, I’m deep in the heart of the Big Apple (also known as enemy territory if you share my love for the Red Sox) for Web Optimization Summit 2014.

Day 1 has delivered some fantastic presentations, and luckily, I was able to catch Michael Zane, Senior Director Online Marketing, Publishers Clearing House, in his session, “How to Personalize the Online Experience to Increase Engagement.”

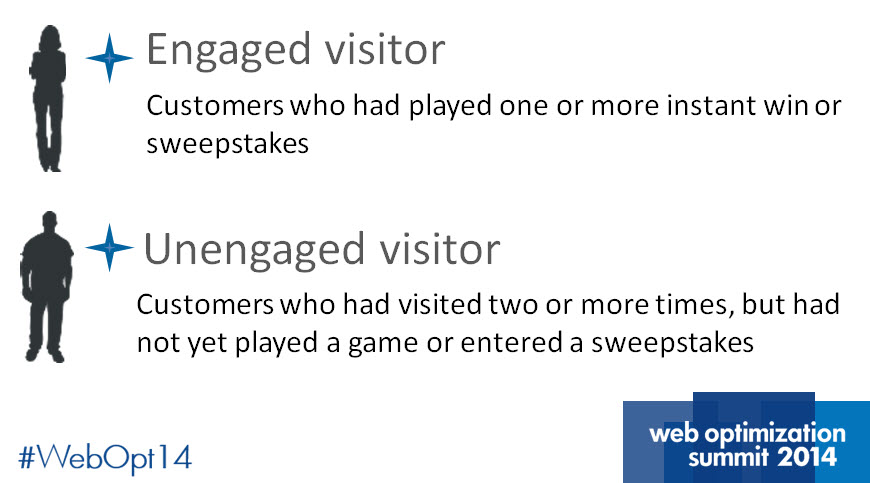

Michael’s take on personalization starts with a key distinction among visitors to PCH, one he raised early in the session.

“You have to define your personas,” he said. “It only made sense for us to take a simplistic approach at first and then dig deeper.”

According to Michael, the challenge rests in driving engagement among unengaged visitors. To help the company’s engagement efforts, Michael and his team turned to testing and optimization.

In this MarketingSherpa Blog post, we’ll take a look at some of his team’s testing efforts, including one key aspect that often goes unspoken.

Before we get started, let’s look at the research notes for some background information on the test.

Objective: To convert unengaged visitors into engaged customers.

Primary Research Question: Will a simple, but attention-grabbing, header convince unengaged visitors to play a game?

Test Design: A/B split test

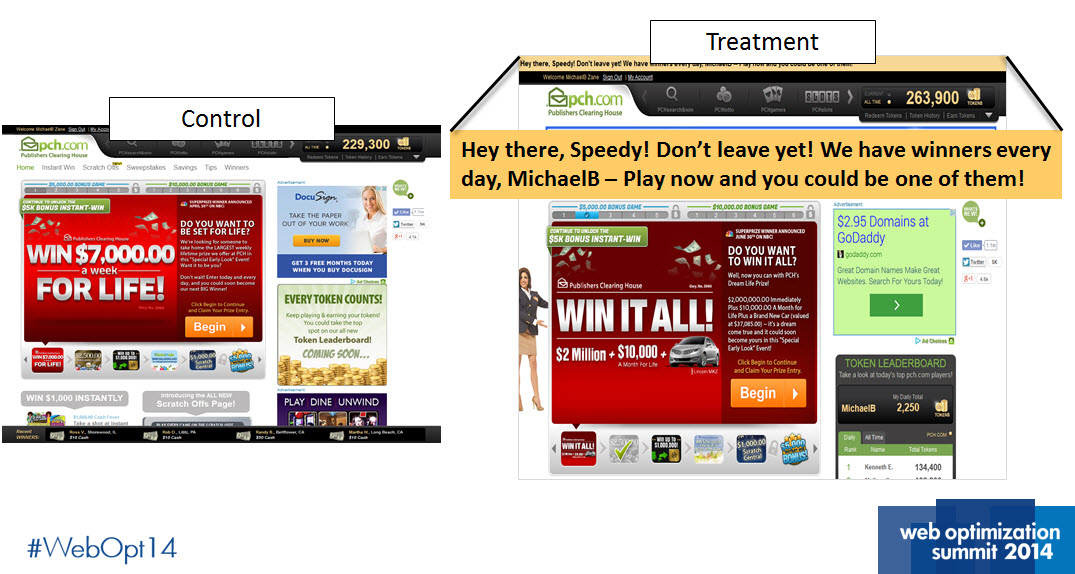

Experiment #1. Side by side

Michael and his team decided to test a header they hypothesized would encourage visitors to play a game.

“The text in the treatment was innocent at the top of the page and it wasn’t really competing with the other content,” Michael said.

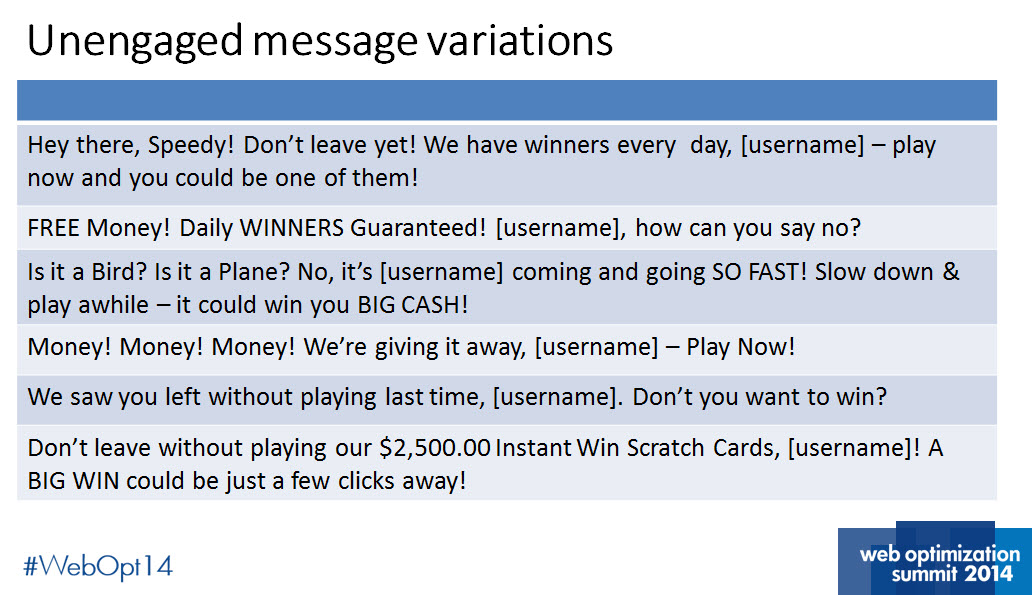

The team also used a variety of messages in the experiment to help them dial in on their core value proposition.

Results

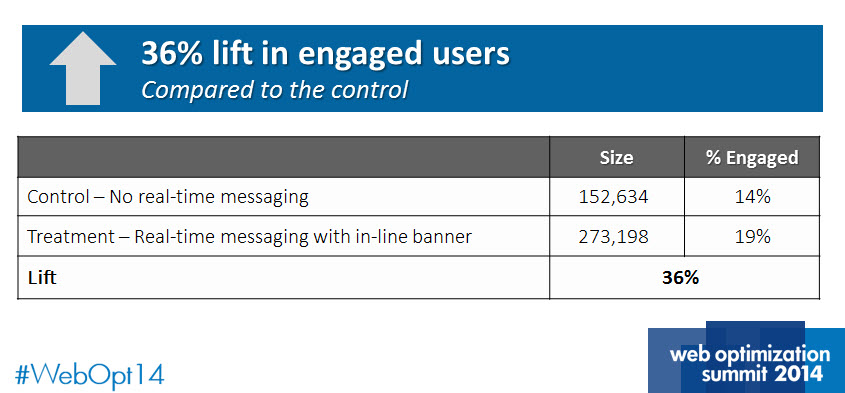

The treatment outperformed the control by a relative difference of 36%. Plenty of marketers would be thrilled by these results.

However, Michael made an interesting point here that deserves far more attention than it usually gets.

“The initial test showed strong results, but they are only valuable if it can be repeated,” Michael said.
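For readers who want to see the arithmetic behind a claim like "a relative difference of 36%," here is a minimal Python sketch. The visitor and conversion counts are hypothetical, chosen only so the lift comes out to 36%; they are not PCH's data, and the function names are mine. The sketch also runs a standard two-proportion z-test, which is one common way to check whether a lift is likely to hold up when a test is repeated. It is not necessarily the method Michael's team used.

```python
import math


def relative_difference(control_rate, treatment_rate):
    """Relative lift of the treatment over the control."""
    return (treatment_rate - control_rate) / control_rate


def two_proportion_z_test(conv_c, n_c, conv_t, n_t):
    """Two-sided z-test for a difference between two conversion rates."""
    p_c = conv_c / n_c
    p_t = conv_t / n_t
    # Pooled rate under the assumption that both pages convert equally.
    pooled = (conv_c + conv_t) / (n_c + n_t)
    std_err = math.sqrt(pooled * (1 - pooled) * (1 / n_c + 1 / n_t))
    z = (p_t - p_c) / std_err
    # Two-sided p-value from the standard normal distribution.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


# Hypothetical counts, for illustration only (not from the PCH test).
control_conversions, control_visitors = 500, 10_000
treatment_conversions, treatment_visitors = 680, 10_000

lift = relative_difference(
    control_conversions / control_visitors,
    treatment_conversions / treatment_visitors,
)
z, p_value = two_proportion_z_test(
    control_conversions, control_visitors,
    treatment_conversions, treatment_visitors,
)

print(f"Relative difference: {lift:.0%}")
print(f"z = {z:.2f}, p-value = {p_value:.2g}")
```

A small p-value suggests the difference is unlikely to be chance alone, but as Michael points out, the stronger check is running the test again and seeing whether the lift shows up a second time.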

Experiment #2. Testing for the two-peat

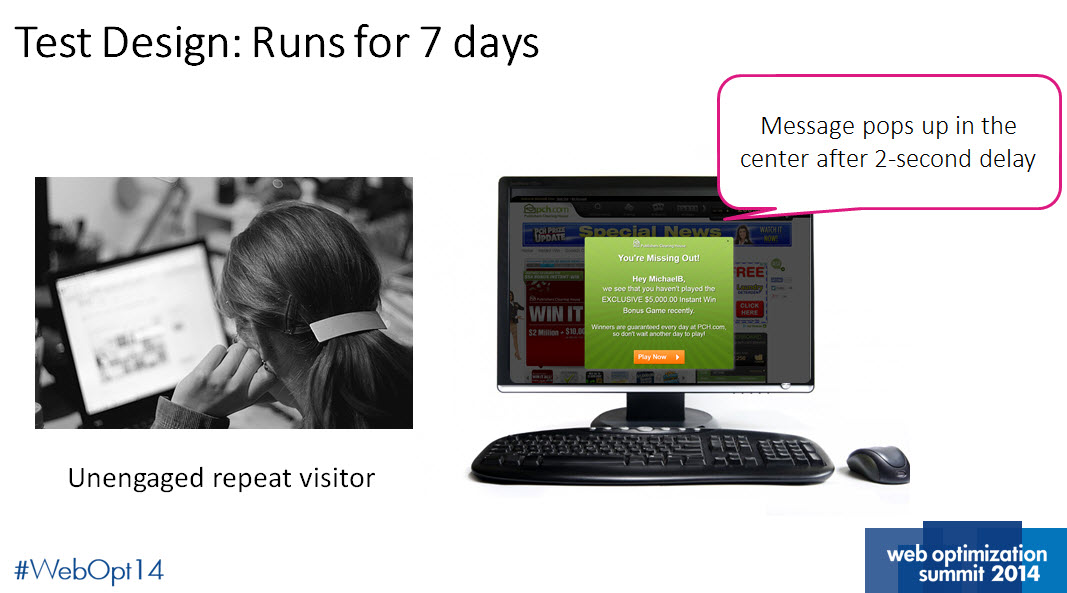

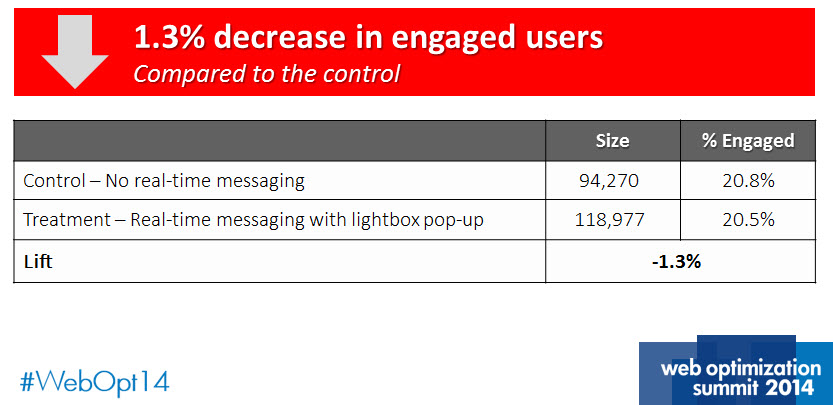

Michael’s team set up a second test to continue to build on their engagement success. For this experiment, the team devised a lightbox pop-up that interrupted users after two seconds on the site.

Results

After only four days, Michael and his team concluded that the new lightbox approach was decreasing conversion.

“Having this failure helped us validate the metrics,” Michael said. “We didn’t want to rely just on third-party metrics. Not every test is a winner.”