Social media measurement is in its early phases, and marketers need to choose between two approaches: parse the social media cacophony much like a radio astronomer, gathering as much data as possible to discern signs of life, or focus selectively on a small but sufficiently meaningful set of metrics.

The word “sufficient” can span a wide spectrum, and determining what is sufficient is perhaps the question that marketers must answer.

In some sense, you really don’t have a choice. How much data you can afford to collect and analyze is limited by your organization’s budgetary and human resources. If you are not already collecting enough data for “big” analytics (“Approach 1” that I described in my last blog post), it makes sense to get the most out of what you have now relatively quickly, and in the process learn what additional data you need.

I spend a significant amount of time on digital photography, and my friends often ask me for advice on what camera to buy as they are getting more “serious.” My answer is always the same: first, get the most out of the camera you have. Once you start appreciating what your camera lacks, you can start thinking about investing in those specific features.

In the same sense, getting started is critical. Reading blog posts will not give you a concrete sense of social media (SoMe) measurement until you get your own hands on a monitoring tool—even if you start by manually listening to conversations using RSS feeds, Twitter, Google Alerts, and the like.

Second, you need to clearly identify your objectives. In our own research project on SoMe measurement with Radian6, I am leaning toward focusing on best practices for specific scenarios—e.g., a Facebook company page—to deal with manageable amounts of data and produce results on a realistic timeline.

So for those not quite ready for “big” analytics, let’s take a look at a quick start approach…

Approach 2: A microscope, not a radio telescope

Commit to a set of metrics you’ll be accountable for, and stick with them. This far more pragmatic approach does not require every kind of data to be available for measurement. It may appear unscientific, but it is not. While focusing on a smaller number of metrics does not paint the whole picture the way the first approach does, trending data over time can be highly valuable and meaningful in reflecting the effectiveness of marketing efforts.

Given the marginal time, effort, and talent required to process more data, it makes economic sense to focus on a smaller number of data points. With fewer numbers to crunch, marketers armed only with, for example, the data available directly from their social media management tools can calibrate their marketing efforts against this data to build actionable KPIs (key performance indicators).
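To make "trending data over time" a bit more concrete, here is a minimal Python sketch of period-over-period change for a handful of metrics. The metric names and weekly numbers are hypothetical placeholders I made up for illustration; in practice you would substitute whatever your own social media management tool exports.

```python
# Hypothetical weekly exports; metric names and numbers are illustrative only.
weekly_metrics = {
    "page_likes": [1200, 1260, 1310, 1400],
    "comments": [45, 40, 52, 61],
    "support_replies": [18, 22, 21, 30],
}


def period_over_period_change(values):
    """Return the percent change between each consecutive pair of periods."""
    changes = []
    for previous, current in zip(values, values[1:]):
        if previous == 0:
            # Percent change is undefined when the base period is zero.
            changes.append(None)
        else:
            changes.append((current - previous) / previous * 100)
    return changes


def trend_report(metrics):
    """Map each metric name to its list of period-over-period percent changes."""
    return {
        name: period_over_period_change(values)
        for name, values in metrics.items()
    }


if __name__ == "__main__":
    for name, changes in trend_report(weekly_metrics).items():
        formatted = ", ".join(
            "n/a" if change is None else f"{change:+.1f}%" for change in changes
        )
        print(f"{name}: {formatted}")
```

The point of keeping it this small is that the same few numbers, tracked consistently week after week, are often enough to show whether a marketing effort is moving in the right direction.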

During Social Media Week, Alex Romanovich, CMO, and Ytzik Aranov, COO, of NYC-based Social2B presented a straightforward measurement strategy rooted in established, if not venerated, marketing heuristics, such as Michael Porter’s Value Chain Analysis. Their core message is that different social media KPIs will be important to different companies and industry segments, and that “these KPIs also have to align well with more traditional metrics for that business – something that the C-Level and the financial community of this company will clearly understand.”

Alex stresses that “the entire ‘value chain’ of the enterprise can be affected by these metrics and KPIs – hence, if the organization has a sales culture and is highly client-centric, the entire organization may have to adapt the KPIs used by the sales organization, and translated back to the financial indicators and cause factors.”

This approach should immediately make sense to marketers, even without any knowledge of statistical analysis.

Social2B focuses not only on marketing but also on the customer service component of SoMe ROI. Here is Ytzik’s short list of steps for getting there:

- Define the social media campaign for customer service resolution

- Solve for the KPI and projections

- Apply Enterprise Scorecard parameters, categories

- Solve for risk, enterprise cost, growth, etc.

- Map to social media campaign cost

- Solve for reduction in enterprise costs through social media

- Justify and allocate budget to social media
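The last three steps above come down to simple arithmetic once you have the inputs. The Python sketch below is my own illustration, not Social2B's method: it assumes, hypothetically, that the cost reduction comes from support tickets resolved through social media instead of a costlier channel. The function names and all figures are placeholders.

```python
def cost_reduction(tickets_deflected, cost_per_ticket):
    """Estimated enterprise savings from tickets resolved via social media.

    Assumption: each deflected ticket saves the full cost of handling it
    through the traditional channel.
    """
    return tickets_deflected * cost_per_ticket


def social_media_roi(savings, campaign_cost):
    """Return ROI as a fraction: (savings - cost) / cost."""
    if campaign_cost <= 0:
        raise ValueError("campaign_cost must be positive")
    return (savings - campaign_cost) / campaign_cost


if __name__ == "__main__":
    # Hypothetical monthly figures, for illustration only.
    savings = cost_reduction(tickets_deflected=400, cost_per_ticket=12.50)
    roi = social_media_roi(savings, campaign_cost=3000.00)
    print(f"Estimated savings: ${savings:,.2f}")
    print(f"ROI: {roi:.1%}")
```

A figure like this is only as good as the assumptions behind it, so it is worth stating them explicitly when you present the number as part of a budget request.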

An important element here is the Enterprise Scorecard—another established (though loosely defined) management tool that is often overlooked even by large-scale marketing organizations. Given the novelty of SoMe, getting it into the company budget requires not only proving the ROI numerically, but also speaking the right language. Ytzik’s “C-level Suite Roadmap” might appear simple, but it requires that corporate marketers study up on their notes from business school:

- Engage in Compass Management (managing and influencing your organization vertically and horizontally in all directions)

- Define who owns the Web and social media within the company

- Identify the enterprise’s value chain components

- Understand the enterprise’s financial scorecard

Again, no statistics here—it is understood that analysis will be required, but these tools will put you in a good position when the time comes to present your figures.

How to get started

Finally, I wanted to get as pragmatic as possible to help marketers get started and not get stuck in a data deluge. Here are Social2B’s top 10 questions to ask yourself before you scale your SoMe programs:

- Are my organization and my executive management team ready for social media marketing and branding?

- Does everyone treat social media as a strategic effort or as an offshoot of marketing or PR/communications?

- Where in the organization will social media reside?

- Will I be able to allocate sufficient budget to social media efforts in our company?

- How will social media discipline be aligned with HR, Technology, Customer Service, Sales, etc.?

- What tools and technologies will I need to implement social media campaigns?

- Will ‘social’ also include ‘mobile’?

- How will we integrate SoMe marketing campaigns with existing, more ‘traditional’ marketing efforts?

- How much organizational training will we need to implement in integrating ‘social’ within our enterprise?

- Are we going to use ‘social’ for advertising and PR/Communications? What about ‘disaster recovery’ and ‘reputation management’?

Related Resources

Social Media Measurement: Big data is within reach

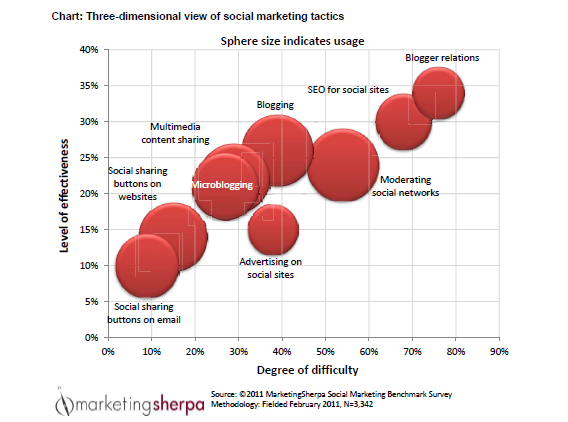

2011 Social Marketing Benchmark Report – Save $100 with presale offer (ends tomorrow, April 30)

Always Integrate Social Marketing?